"No Holding Back FileMaker Blogging"



Abstracted Log


Level: Advanced

Version: FileMaker 19

Category: Calculations

Tuesday, May 11, 2021

A long, long time ago, in a faraway land, Bob Cusick, of Clickware, sent me a tip file that used a single field to log changes to fields in a table. Through the years I modified it and released it many times, but here’s the latest version. This version is so abstracted that all you need is a single custom function with two parameters: one specifying the fields that trigger the log and one for the log field itself. That means you can copy and paste the formula from table to table and make minor changes to create logs in all your files.

BTW: Bob Cusick is no longer in the FileMaker market so don’t expect much FileMaker stuff if you visit his Clickware web site. On my last visit, there was just a single web page with a reference to an email address asking if you want to hire him.

The Evolution

My obsession with tracking changes at the field level started back when I saw a technique demonstrating how to track the date, time and account when a field was modified. It's always been possible to track changes at the record level with the built-in Auto-Enter features, but tracking at the field level is a whole different story. If you are interested, you can still see the example file at Field Mod.

Tracking all the fields you want, in a single log field, is quite a bit more complicated. When the single field log file was first sent to me by Bob, it wasn't abstracted at all. In fact, FileMaker 3.0 (.fp3 file format) couldn't really abstract the technique because several features were missing, such as the Self function, the Get(ActiveFieldContents) function, Custom Functions and the ability to Auto-Enter and replace the current content of the field.

FYI: Back in the FileMaker 3.0 days, Get functions were called Status functions. Instead of Get(ActiveFieldName), you had Status(CurrentFieldName).

Back then, the philosophy was that FileMaker had the tools to get the job done, even if it was a bit of a hack. I'm quite fond of my time spent in the early days of FileMaker because it taught me so much about the way of FileMaker. So, let me show some of the techniques that were used prior to modern-day FileMaker. This will help you understand how things worked in the old days and therefore understand why the abstracted log at the end of this article works the way it does today.

Let's start with the main formula that's stored in a calculation field titled "Current Changes":

"- DATE: " &

GetAsText(Get(CurrentDate)) &

", " &

"TIME: " &

GetAsText(Get(CurrentTime)) &

", " &

"FIELD: " &

Get(ActiveFieldName) &

", " &

"DATA: " &

Case(Name ≠ Name Old; Name Old & " -> " & Name;

Address ≠ Address Old; Address Old & " -> " & Address;

City ≠ City Old; City Old & " -> " & City;

State ≠ State Old; State Old & " -> " & State;

Zip ≠ Zip Old; Zip Old & " -> " & Zip;

"")

& "¶" & Log

BTW: It's also important to note that the "Current Changes" calculation field is unstored. There's no need to store this data since the actual log is stored in the "Log" field, as you will see below. In fact, storing the calculation result will cause the technique to stop functioning properly.

This formula would produce a result something like the following:

- DATE: 1/25/2021, TIME: 12:17:04, FIELD: Address, DATA: 2242 Avy Avenue #7 -> 2242 Avy Avenue #7a

- DATE: 1/22/2021, TIME: 12:17:04, FIELD: City, DATA: Menlo Park -> Chino Hills

- DATE: 12/25/2020, TIME: 12:17:04, FIELD: Zip, DATA: 94025 -> 91709

The job of this formula is to track all the changes. The beginning is pretty easy, simply gathering the current date, time and active field name. As mentioned above, back in the old days the Get(ActiveFieldName), or rather Status(CurrentFieldName), did NOT exist. Therefore, the part with the Case statement needs some explaining.

The Case statement tests to see which field was modified, so every field that needs to be tracked has to be included in the conditional. The fields in the example above include Name, Address, City, State and Zip. Each of these fields also has a counterpart that stores the old version of the data. This is important for two reasons. First, the old data can be used to show what the value changed from, not just what it changed to. But, more importantly, the "old" data allows us to detect which field actually changed.

FYI: The Auto-Enter calculation fires upon exiting a modified field that is referenced in the Auto-Enter calculation. The trigger field(s) must be in the same table per the dependency tree. See the Podcast, The Calculation Dependency Tree, for more information.

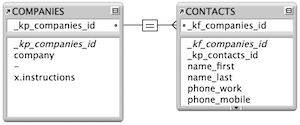

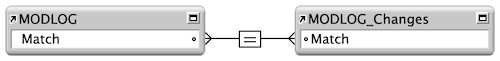

Determining which fields changed was done using Lookups because, again, there was no Auto-Enter calculation with the "do not replace existing value of field (if any)" option until FileMaker 7. A calculated key and a self-join relationship populated the "old" fields as well as another field called "Log". Let's start with the calculated key called "Match":

Case(IsEmpty(Name) or IsEmpty(Address) or IsEmpty(City) or IsEmpty(State) or IsEmpty(Zip); Serial; Serial)

The Case statement references every tracked field, just like the "Current Changes" field, so the calculation is triggered every time one of the fields is modified. Both branches return Serial, so the key value never changes; the field references exist only to trigger the recalculation. This, in turn, triggers the lookup to locate the current record and grab the old information. That's right! The lookup occurs after the changes are made, allowing the unstored calculation field, "Current Changes", to grab both the new and old information.

FYI: Relationships weren't graphical before FileMaker 7 but I haven't been able to run FileMaker 6 on a modern operating system for years.

The lookup is also used to move the Current Changes into the Log text field, where it is stored and viewed by anyone who wants to see the changes. In summary, when one of the specified data entry fields is modified and the field is exited, the lookup fires. The lookup then grabs whatever is in the "Current Changes" field at that moment.

There was also some crazy stuff requiring the fields to be created in a certain order via Manage Database (Define Field back then) in order for everything to work properly. You also needed a special script for creating a new record or the log wouldn't track changes until the record was committed at least once. Luckily, we don't have to do all this stuff today or we'd go batty. So, I think that's enough to give you a flavor of what it was like to program back then so you can appreciate how easy it is today (relatively).

Finally, the Abstracted Log

Over the years, I've created a lot of versions of the single field log. As features were introduced, we could remove fields, relationships, lookups, etc. until the technique only required a single field and a single formula. I like to store this formula in a custom function so it can be accessed by any table in the solution. This centralizes the code so, if I have to make a change, I don't have to hunt around for all the tables where I implemented a log.

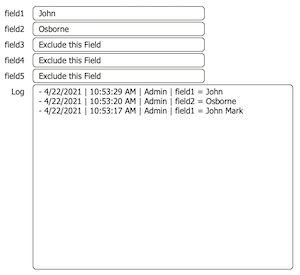

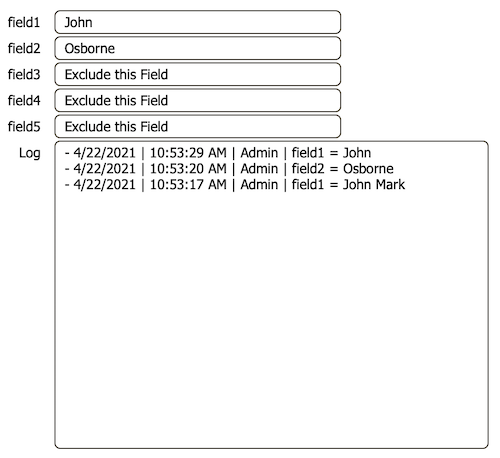

Here's the custom function, LogAbstract(TriggerFields; LogField):

"- " &

GetAsText(Get(CurrentDate)) & " | " &

GetAsText(Get(CurrentTime)) & " | " &

Get(AccountName) & " | " &

Get(ActiveFieldName) & " = " &

Get(ActiveFieldContents) &

¶ & LogField

This formula is so simple compared to the mud we had to wade through in the old days. It's like the top half of the old formula, simply asking for the date, time, account, field name and field contents. This is all concatenated to the current contents of the LogField. Since the Log field has an Auto-Enter calculation that calls this custom function, with the option "do not replace existing value of field (if any)" unchecked, each change gets added to the top of the stack.

FYI: I've done away with showing both what the data was originally and what it changed to, for a couple of reasons. First, the original data is already farther down in the log. Second, there's no need to look up the current record to make the log field copy the unstored calculation contents since the Auto-Enter feature can handle the job without a relationship.
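Because the formula relies on Get(ActiveFieldName), it only has something meaningful to record when a field actually has the focus. As a hedged sketch (not part of the example file), the custom function could be wrapped in a Case that leaves the log untouched when no field is active. This assumes Get(ActiveFieldName) returns an empty string in that situation, and it is a variation of my own for illustration, so test it in your own solution before relying on it:

```
// LogAbstract ( TriggerFields ; LogField ), hypothetical guarded variant
Case (
  // No active field: return the existing log unchanged
  IsEmpty ( Get ( ActiveFieldName ) ) ; LogField ;

  // Otherwise prepend a new entry, exactly as in the original function
  "- " &
  GetAsText ( Get ( CurrentDate ) ) & " | " &
  GetAsText ( Get ( CurrentTime ) ) & " | " &
  Get ( AccountName ) & " | " &
  Get ( ActiveFieldName ) & " = " &
  Get ( ActiveFieldContents ) &
  ¶ & LogField
)
```

The trade-off is that changes made with no field active are silently skipped rather than logged with a blank field name.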

Here's the Auto-Enter formula attached to the Log field that calls the custom function:

LogAbstract(field1 & field2; Self)

Each field you want to track needs to be included in the first parameter, making it much easier to program. In other words, there's only one place to list the fields to be tracked, rather than two. The second parameter is always the Self function, allowing the custom function to identify the field logging the changes.
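As a concrete illustration, assuming the same five fields used in the old-style example earlier in this article (Name, Address, City, State and Zip), the Auto-Enter calculation on the Log field might look something like this:

```
// Auto-Enter calculation on the Log field, with
// "Do not replace existing value of field (if any)" unchecked
LogAbstract ( Name & Address & City & State & Zip ; Self )
```

Notice that the TriggerFields parameter is never referenced inside the custom function itself. Its only job is to place the tracked fields in the Auto-Enter calculation so that a change to any one of them causes the calculation to fire.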

Honorable Mentions

Ray Cologon, of Nightwing Enterprises, has a wonderful version of this type of log, including a feature that archives the changes into a standard relational model where each change is a record. This helps with reporting and searching. Here's a direct link to the UltraLog 2.0.

Two Part Article

If you liked the content in this article, there will be a part two covering the proper methods for using Script Triggers to log changes into a separate table so searches and reports can be used more effectively.

Author:

John Mark Osborne

jmo@filemakerpros.com

www.databasepros.com

Example File (available to patrons at $5.00 per month): Download here if you are a patron


Comments:

Mariusz Klecha

12/20/2021

Hi John, thanks for sharing. Nice job.

I'm just wondering why log is making two lines if I change the value in the related field.

It seems that there is some kind of delay or something ? If I edit the field in main Table is ok, but via relation, then 2 entries.

Regards

Ryan Hart

05/13/2021

Solid improvement to an old school technique.

Jeffrey Henry

05/12/2021

Awesome, can't wait to try this out. Thanks!

Daniel Wood

05/11/2021

hi John, great article thanks for sharing. Logging is something developers should be paying far more attention to.

I encourage you to check out my audit logging offering in the FileMaker sphere - it's called elemental_log. It's JSON based and packed with features.

One thing i noted in your implementation is usage of get(activeFieldName) and get(activeFieldContents).

This implies the logging is only going to work where a user directly interacts with a field on a layout rather than if that field is modified via other means such as script or data API, is this correct?

A way to think about this alternatively is rather than using these functions, investigate the usage of the Get ( ModifiedFields ) function instead. This will give you a list of fields that have been modified in the previous commit/create action. You can then evaluate these fields for their current (modified) contents. This is one of the methods I use in elemental_log.

Thanks for bringing logging to the forefront of peoples minds in the FM world.
