Deleting Duplicates


Level: Beginner

Version: FileMaker 18

Category: Calculations

Tuesday, December 10, 2019

Database administrators have nightmares about duplicate data entry. There are tons of solutions out there. Which direction do you go? Do you try to prevent duplicate record entry with validation or scripting? Do you let the duplicates get entered and delete them periodically? And what about merging two records? Maybe it's not a nightmare, but at least it's an issue you have to address before allowing users to enter data. This article will discuss the ins and outs of the various methods for deleting duplicates, followed by another article discussing how to prevent them from being entered in the first place.

The Exclamation Point!

Let's start with the basics. FileMaker provides a handy method to search for duplicates via the find mode exclamation point (!) operator. All you do is enter find mode, place an exclamation point (!) into a field and perform a search. If you have a lot of duplicates, identifying which duplicate to keep can be aided by sorting the database by the field that was searched.
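For readers who think in code, here is a rough Python sketch of what a single-field search with the exclamation point (!) followed by a sort amounts to. It is not FileMaker code; the function name `find_duplicates` and the sample contacts are made up for illustration.

```python
from collections import Counter

def find_duplicates(records, field):
    """Return records whose value in `field` occurs more than once, sorted by that field."""
    counts = Counter(r[field] for r in records)
    found = [r for r in records if counts[r[field]] > 1]
    # Sorting by the searched field puts each group of duplicates side by side.
    return sorted(found, key=lambda r: r[field])

# Made-up sample data, for illustration only.
contacts = [
    {"name_last": "Simpson", "address": "742 Evergreen Terrace"},
    {"name_last": "Osborne", "address": "2066 Rancho Hills Drive"},
    {"name_last": "Flanders", "address": "744 Evergreen Terrace"},
    {"name_last": "Osborne", "address": "2066 Rancho Hills Dr"},
]
found = find_duplicates(contacts, "name_last")
```

Only the two "Osborne" records end up in the found set, grouped together and ready for a human to compare.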

It's a great little tool that is often misused. The issue is you can't use the exclamation point (!) in more than one field during a search. But can one field really identify a duplicate record? Most likely, you will need two or three fields to accurately identify a duplicate record. The sad thing is, the online FileMaker help regarding finding duplicate records never mentions it can't be used on more than one field. Hence, the misuse.
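One way to picture why a multi-field search misleads is sketched below in Python. It assumes that each exclamation point (!) criterion is evaluated on its own field and that the criteria in one request are combined with AND; that is my reading of the behavior, not something the help states. The function names and sample data are hypothetical.

```python
from collections import Counter

def bang_per_field(records, fields):
    """Assumed behavior: each field is checked for duplicates separately, then ANDed."""
    counts = {f: Counter(r[f] for r in records) for f in fields}
    return [r for r in records if all(counts[f][r[f]] > 1 for f in fields)]

def bang_combined(records, fields):
    """Duplicates of the combined value, which is what a de-duping key needs."""
    def key(r):
        return tuple(r[f] for f in fields)
    counts = Counter(key(r) for r in records)
    return [r for r in records if counts[key(r)] > 1]

# Made-up sample data: no two people share both last name and street number.
people = [
    {"last": "Smith", "street_no": "12"},
    {"last": "Smith", "street_no": "99"},
    {"last": "Jones", "street_no": "12"},
    {"last": "Jones", "street_no": "99"},
]
per_field = bang_per_field(people, ["last", "street_no"])
combined = bang_combined(people, ["last", "street_no"])
```

Under that assumption, the per-field search flags all four records even though none of them is a true duplicate, while the combined value flags none.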

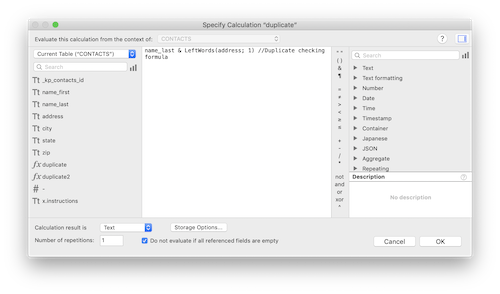

Okay, the online help does suggest using a calculation field but never says you will get erroneous results if the exclamation point (!) is used in more than one field. So, let's spend a little time discussing how a calculation can combine more than one field to identify a duplicate. Let's say you have a contacts table or some table containing people. You also probably have address information. A good way to create a formula is to use the last name and part of the address.

name_last & Leftwords(address; 1)

You might be thinking, why not use the first name and/or the entire address. The reason is typos! Data entry folks are likely to enter "Dr", "Drive", "Dr." or not even enter the type of street at all. However, they are likely to get the street number correct. The same is true for the first name field. You might enter "John", "Jon", "Johnathan", "Johnathen", "Johnny" or "Johnnie", not considering how important accurate data entry is for a database administrator.
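To make the reasoning concrete, here is a minimal Python sketch of the same key. The helper `left_words` is only a rough stand-in for the LeftWords function (it splits on whitespace, which is simpler than FileMaker's word rules), and `dup_key` is a hypothetical name.

```python
def left_words(text, count):
    """Rough stand-in for LeftWords: the first `count` whitespace-separated words."""
    return " ".join(text.split()[:count])

def dup_key(name_last, address):
    # Mirrors the formula: name_last & LeftWords(address; 1)
    return name_last + left_words(address, 1)

# Three ways someone might type the same address.
variants = ["2066 Rancho Hills Drive", "2066 Rancho Hills Dr.", "2066 Rancho Hills"]
keys = {dup_key("Osborne", a) for a in variants}
```

All three variants collapse to the single key "Osborne2066", which is exactly why the formula ignores the rest of the address.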

This is only one example of a very common calculation for a contact manager. However, you might be devising a formula for a different table type. What you want to take away from this discussion is the concept behind designing a "de-duping" calculation rather than the exact steps. Sit down and think about what can accurately identify a duplicate in most situations in order to define an accurate duplicate calculation.

Don't forget to give that calculation a text result rather than the default number result. It could ruin your day trying to figure out why the search is bringing up the wrong duplicates. The result in Browse mode will look correct, but because of the number result, the index won't work correctly when the search with the exclamation point (!) is performed.
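A plausible way to picture the failure is sketched below in Python. It assumes a number result is indexed by its numeric characters only, roughly like GetAsNumber; the helper `as_number_like` is a deliberate simplification (digits only) and not FileMaker's actual indexing code.

```python
import re

def as_number_like(text):
    """Simplified stand-in for a number result: keep only the digits."""
    return re.sub(r"\D", "", text)

# Two different people whose street numbers happen to match (made-up data).
text_keys = ["Osborne2066", "Simpson2066"]
number_keys = [as_number_like(k) for k in text_keys]
```

As text the two keys are different, but under this assumption both index as 2066, so the search would report them as duplicates of each other.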

Now, this calculation can be used by a database administrator to search the database manually. Add a sort by the field you searched and the administrator can manually remove small numbers of duplicate records, or even merge two records before deletion if both contain important information. If there are lots of duplicate records or the process needs to be automated for users, then some other steps must be taken.

Refining the Formula

If you download the example file that comes with this article, you'll notice that a search on the exclamation point (!) in the calculation field provided above returns a bunch of records as duplicates. The first two are "John Osborne" and "John Mark Osborne" at "2066 Rancho Hills Drive". Even though these two records aren't exactly the same, the way the formula was designed considers these duplicates and rightly so. If the entire first name had been used in the formula, these two records would have gone undetected.

Another pair of duplicates is "Marge Simpson" and "Homer Simpson" at the same address. Because we didn't include the first name field in the formula (for fear of spelling variations and nicknames), they have been flagged as the same record. This is where you need to refine the algorithm, when necessary. It all depends on your data and your testing of that data. If you decide you want a new formula, a good middle ground might be to use the first letter of the first name:

Left(name_first; 1) & name_last & Leftwords(address; 1)

This formula will differentiate between folks with the same last name and address but also account for most spelling variations and nicknames. It also correctly catches the different spellings in "John" and "John Mark". In other words, you really need to think about the formula and how it will react with your data before blindly offering the same code for every database.
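The effect of the refined formula can be checked with a small Python sketch. `refined_key` is a hypothetical name, the whitespace split is a simplification of LeftWords, and the Simpson address is made-up sample data.

```python
def refined_key(name_first, name_last, address):
    # Mirrors: Left(name_first; 1) & name_last & LeftWords(address; 1)
    words = address.split()
    first_word = words[0] if words else ""
    return name_first[:1] + name_last + first_word

# "John" and "John Mark" still share a key...
same_person = (
    refined_key("John", "Osborne", "2066 Rancho Hills Drive")
    == refined_key("John Mark", "Osborne", "2066 Rancho Hills Drive")
)
# ...while Marge and Homer no longer do.
different_people = (
    refined_key("Marge", "Simpson", "742 Evergreen Terrace")
    != refined_key("Homer", "Simpson", "742 Evergreen Terrace")
)
```

The first initial is enough to separate family members at one address, but it would still merge, say, a "Mark" and a "Marge" at the same address, so test against your own data.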

Script It!

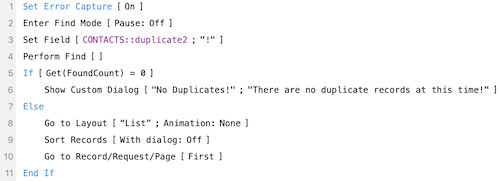

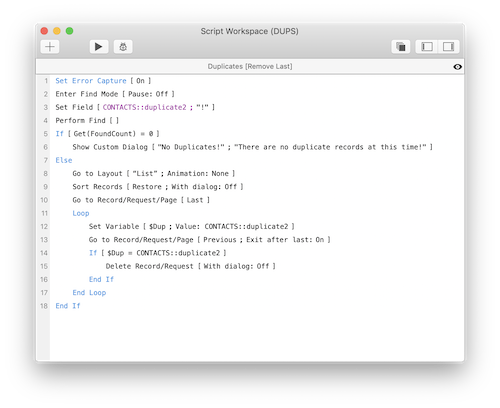

Offering users a script that deletes duplicate records is like playing with fire. I prefer to guide users through the duplicate identification process and leave the deletion to the decision-making prowess of the human mind. There's just too much opportunity for things to go wrong when deleting records is involved. Even with a script that doesn't delete records, I still think a lot of caution is required when allowing a user to delete records. I don't typically let people delete records since the ramifications of mistakes can be devastating. With that said, here's a simple script for identifying duplicate records:

FYI: The script above searches the second duplicate calculation we created in this article.

I prefer to spell out my script steps for readability rather than hide the find criteria in a single Perform Find step. Sure, it's longer, but it's so much easier to understand what's going on without clicking through several dialogs. You could add a comment, but then you've added another line and also have to remember to update the comment if the script changes.

I also like to go to the first record after sorting, since FileMaker stays on the record that was selected when the database was unsorted. After sorting, the list may be scrolled halfway down the window. Most people like to start at the top, so it's an added convenience.

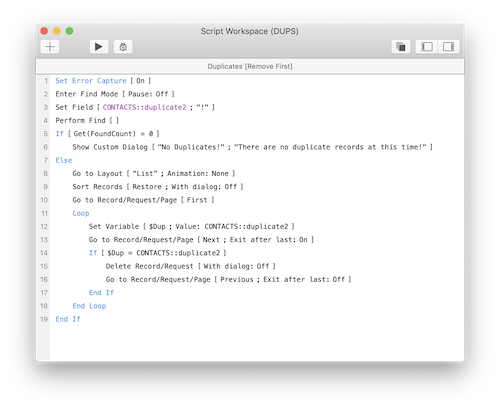

Taking it Further

I cover scripts for removing duplicates in my classes, but it's more of an exercise in how to construct looping scripts than a viable solution. Again, I recommend duplicates be analyzed by a human before being deleted or merged. Of course, there are always exceptions, such as a newly imported list of contacts that needs to be de-duped. In this case, efficiency is of the utmost importance.

The first part of the script is exactly the same as what we've already covered, so I'm going to skip lines 1 to 10 and just cover the looping construct. The idea is to compare adjacent records to each other to see if they have the same value in the duplicate checking calculation field. Because the records are sorted, like records are grouped together, so the script can simply walk down the list and easily remove one or more duplicates. Just grab a value from the first record, place it in a variable, go to the next record and compare them. If record two is a duplicate of record one, then delete it. Since FileMaker will select the next record after a delete, it's important to move back one record so the script can check for a possible second duplicate.
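The looping logic described above can be sketched in Python. This is not the FileMaker script itself, only an imitation of its control flow on a plain list; the function name and the `(key, serial)` tuples are assumptions for illustration.

```python
def delete_duplicates_keep_first(records, key):
    """Walk a sorted list, deleting any record whose key matches the record before it."""
    rows = sorted(records, key=key)  # like records are grouped together
    i = 0
    while i + 1 < len(rows):
        current = key(rows[i])       # value grabbed from the current record
        i += 1                       # go to the next record
        if key(rows[i]) == current:
            del rows[i]              # delete the duplicate...
            i -= 1                   # ...and step back so the keeper is compared again
    return rows

# (duplicate key, serial number) pairs, made up for illustration.
table = [("Osborne2066", 1), ("Simpson742", 4), ("Osborne2066", 2), ("Osborne2066", 3)]
kept = delete_duplicates_keep_first(table, key=lambda r: r[0])
```

The step back after each deletion is what lets the loop catch a second or third duplicate in the same group.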

TIP: It always helps in the learning process to turn on the Script Debugger and walk through the script slowly so you can see how it reacts.

Variations

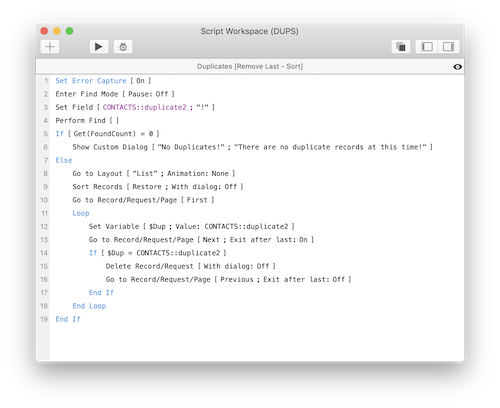

More than likely, the scripts above will function the way you desire, keeping the first of the duplicate records. The first usually has the most additional data on it and should remain as the sole survivor. As an aid to the learning process and just in case you want to keep the most recent duplicate record, here are some alternative scripts. Let's start with the one that has the fewest changes to the concept of the first script for deleting duplicates:

There are only three changes from the original script for removing duplicates. Two of those changes occur on lines 10 and 13. All that's happening is a reversal of the script from top-down to bottom-up by stepping through the records in reverse order. It's that simple! Also, the Go to Record/Request/Page step that selected the previous record after the duplicate was deleted has been removed. Since the script is going in the opposite direction, FileMaker selects the correct record after deletion.
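In Python terms, a hedged sketch of the bottom-up walk looks like this. Again it only imitates the control flow on a list; the function name and sample tuples are hypothetical.

```python
def delete_duplicates_keep_last(records, key):
    """Walk a sorted list from the bottom up, keeping the last record of each group."""
    rows = sorted(records, key=key)
    i = len(rows) - 1                # start on the last record
    while i > 0:
        current = key(rows[i])       # value grabbed from the current record
        i -= 1                       # go to the previous record
        if key(rows[i]) == current:
            del rows[i]              # the keeper slides into position i, so no step back
    return rows

# (duplicate key, serial number) pairs, made up for illustration.
table = [("Osborne2066", 1), ("Osborne2066", 2), ("Osborne2066", 3), ("Simpson742", 4)]
kept = delete_duplicates_keep_last(table, key=lambda r: r[0])
```

Because the record that survives lands where the deleted one was, the loop can carry on without repositioning.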

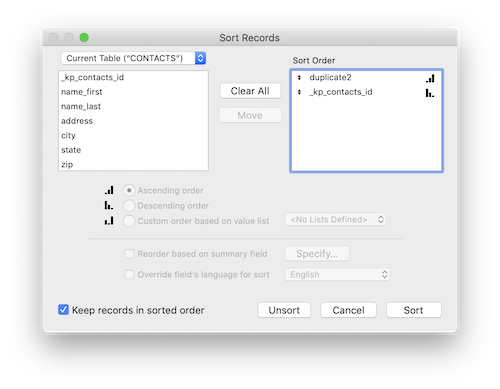

I like to take different looks at scripts to help in the learning process. You also never know when a different approach will be necessary. In the example below, the script simply sorts in reverse order. There are no other changes to the original script that deletes duplicates. You can use a serial number field or a creation date or timestamp, all of which should be in every table you create anyhow. I have chosen a serial number field:

The only change to the original script for removing duplicates is to the Sort script step:
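As a hedged illustration in Python rather than FileMaker script steps, the reverse-sort idea comes down to a second, descending sort field. The field names `dup_key` and `serial` are assumptions.

```python
def keep_newest(records):
    """Sort by duplicate key, then by serial number descending, and keep each group's first row."""
    rows = sorted(records, key=lambda r: (r["dup_key"], -r["serial"]))
    kept = []
    for row in rows:
        # The first record of each group is now the one with the highest serial number.
        if not kept or kept[-1]["dup_key"] != row["dup_key"]:
            kept.append(row)
    return kept

# Made-up sample data.
table = [
    {"dup_key": "JOsborne2066", "serial": 1},
    {"dup_key": "JOsborne2066", "serial": 7},
    {"dup_key": "HSimpson742", "serial": 3},
]
newest = keep_newest(table)
```

The top-down loop stays untouched; only the order in which records reach it changes.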

There's Always an Alternative

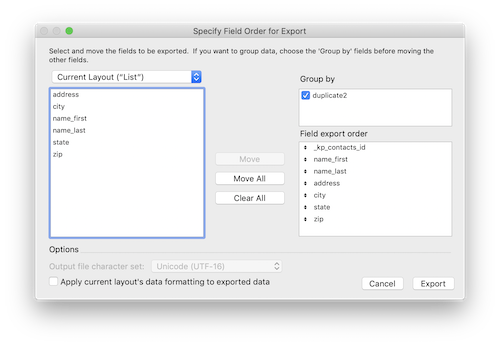

It's always a good idea to have a variety of techniques on your FileMaker tool belt. I like this technique because so few people understand this feature. It's an export option that is designed to export subsummarized data. In other words, it allows you to share a subsummary report with a program like Excel. It can also delete duplicates!

The basic idea behind the "Group By" feature in the "Specify Field Order for Export" dialog is to export one record for each sorted group along with a summary field. It's the same concept as a subsummary report, just for export. Therefore, it's required for the database to be sorted before exporting. The sort field will appear in the export dialog and should be checked to export one record for each grouping rather than one row for every record.

Once you export the duplicate records, you can delete the originals and import the de-duped file. I recommend FileMaker as the export type but you may choose a different format (especially if your goal is simply to provide a clean list of contacts to someone else). The big problem with this approach is you might have to export a lot of fields if you have a large table.
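The export-then-import idea can be pictured with a short Python sketch. It assumes the export keeps the first record of each sorted group; the function name and sample data are hypothetical, and a real export would write a file rather than return a list.

```python
from itertools import groupby

def export_grouped(records, key):
    """Return one record per sorted group, like an export with the sort field grouped."""
    rows = sorted(records, key=key)  # the found set must be sorted first
    return [next(group) for _, group in groupby(rows, key=key)]

# (duplicate key, serial number) pairs, made up for illustration.
table = [("JOsborne2066", 1), ("HSimpson742", 2), ("JOsborne2066", 3)]
exported = export_grouped(table, key=lambda r: r[0])

# "Delete the originals and import the de-duped file."
table = list(exported)
```

Note that every field you care about has to make the round trip, which is the drawback mentioned above for large tables.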

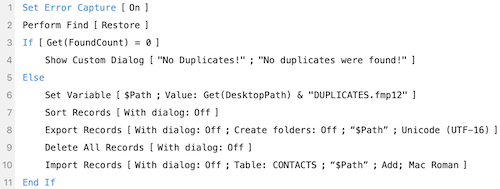

Automating the process is fairly straightforward, relying on a path stored in a variable so the export file can be imported. What I don't like, or am fearful of, is the Delete All Records step. There's just too much potential for the script to fail (usually by programming error) and delete all the records in your database. To avoid calamities, I generally don't script with the Delete All Records step unless it's necessary. Regardless, here is the script that deletes duplicates by exporting and then importing.

My Thoughts

I provided quite a few examples for automating the deletion of duplicate records but have never actually implemented any client solutions containing any of these techniques. I do offer scripts to identify duplicates but not to automatically remove them. I prefer to prevent duplicate records from ever being entered in the first place. I'll cover these techniques in the second article in this series. For now, just be careful where you use the techniques covered in this article. Don't put them into the hands of users and don't let them run untethered. For example, I'd recommend a Show Custom Dialog step before the process of eliminating duplicates begins. The dialog could show how many duplicates are present so a human can decide if it sounds right or not. I guess I'm a worrywart but I've seen too many scenarios where folks have destroyed their data.
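The confirmation idea can be sketched in Python as a guard in front of the deletion. The `confirm` callback is a hypothetical stand-in for a Show Custom Dialog step, and the function names are assumptions.

```python
from collections import Counter

def count_extra_duplicates(records, key):
    """Number of records that would be deleted if each group kept one survivor."""
    counts = Counter(key(r) for r in records)
    return sum(n - 1 for n in counts.values() if n > 1)

def dedupe_with_confirmation(records, key, confirm):
    extras = count_extra_duplicates(records, key)
    message = f"{extras} duplicate record(s) will be deleted. Continue?"
    if extras == 0 or not confirm(message):
        return records               # nothing is deleted without a human saying yes
    seen, kept = set(), []
    for r in records:
        if key(r) not in seen:       # keep the first record of each group
            seen.add(key(r))
            kept.append(r)
    return kept

# Made-up sample data.
table = [("JOsborne2066", 1), ("JOsborne2066", 2), ("HSimpson742", 3)]
untouched = dedupe_with_confirmation(table, lambda r: r[0], confirm=lambda msg: False)
cleaned = dedupe_with_confirmation(table, lambda r: r[0], confirm=lambda msg: True)
```

If the count in the message looks wrong, the person running it can cancel before any record is touched.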

Author:

John Mark Osborne

jmo@filemakerpros.com

www.databasepros.com

Example File (available to patrons at $5.00 per month): Download here if you are a patron

